The Unasked Question: Part 5

Is the Federation Possible?

This series has spent four essays mapping a problem. The circular investment loops. The collapsing economic foundations. The warnings we encoded in fiction and failed to heed. The systematic removal of the governance friction that might have redirected the trajectory.

It would be easy, at this point, to arrive at despair. The incentive map is unfavorable. The window is narrowing. The people with the most power over the outcome have the least structural motivation to use it wisely.

But despair is also a kind of intellectual surrender — a way of feeling clear-eyed while actually stopping short of the hardest question. So let's ask it directly.

Is there a version of this story that ends well?

The honest answer is yes. But it requires understanding what "ends well" actually means — and what the historical conditions for getting there look like.

The model I keep returning to is not utopian fiction. It is the Star Trek universe, specifically the Federation, and not for the reasons people usually cite.

The Federation is not interesting because it depicts nice people with good technology. It is interesting because the canonical history of how it came to exist is, if you pay attention to it, a story about catastrophe as the precondition for transformation.

In that history, humanity went through World War III, a devastating conflict fought with nuclear and biological weapons, with massive casualties and the collapse of significant portions of global civilization. It was not first contact with the Vulcans, or the technology that came with it, that created the Federation's values. It was the experience of having nearly destroyed itself that forced a genuine reckoning with what human civilization was actually for.

The Vulcans didn't find a peaceful, enlightened humanity. They found a humanity that had barely survived its own worst impulses and was, for the first time, genuinely motivated to be different.

This is not a comfortable model. It suggests that the transformation we need may require the crisis we are trying to avoid. But if you look at actual history rather than preferred narratives, it is also a more honest account of how civilizational change tends to happen.

Let's steelman the optimistic case, because it deserves more than dismissal.

Technology does follow S-curves. Exponential growth phases plateau into infrastructure. Electricity was once accessible only to institutions and the wealthy — it became a utility so mundane that people flip switches without thinking. The internet went from military research network to luxury service to invisible infrastructure in roughly forty years. Mobile computing followed a similar arc.

There is a plausible scenario in which AI follows this curve. Models become commoditized. Inference costs drop to near zero. The open source movement — which is already producing capable models outside the control of any single corporation — continues to develop and eventually produces tools accessible to individuals and small organizations at costs that democratize rather than concentrate capability.

In this scenario, the small business that today cannot afford enterprise AI tooling gains access, within five years, to capabilities that today require Fortune 500 infrastructure budgets. The individual researcher, the independent journalist, the community organizer, the small clinic in a rural area: all of them gain leverage that partially compensates for the leverage being accumulated at the top.

This is not fantasy. It happened with computing. It happened with the internet. The question is whether it happens fast enough, and with sufficient distribution of genuine capability rather than just access to a metered service owned by someone else.

The replicator analogy from earlier in this series is worth returning to here, because it points at what the optimistic scenario actually requires.

In Star Trek, the technology that matters most is not the starships or the weapons or even the transporters. It is the replicator — the ability to produce any physical good from energy at effectively zero marginal cost. The replicator is what makes the Federation's economics possible. When any material thing can be produced by anyone at any time at no meaningful cost, the logic of accumulation collapses. There is nothing to hoard. Scarcity, the foundation of every economic system humans have ever built, simply ceases to apply to physical goods.

AI plus robotics is the closest real-world analog to the replicator that has ever existed. The potential is genuinely there — a system that can produce cognitive and physical output at scale, at low cost, available to anyone with access to the infrastructure.

The question is who owns the infrastructure.

In the Federation, the infrastructure is held in common. Nobody owns the replicator network. It is a public utility in the most fundamental sense: a shared resource that serves the collective because it is governed by the collective.

In the scenario we are currently building, the infrastructure is held by a small number of private entities whose obligation is to shareholders, not to the collective. The replicator exists, but it is a subscription service. The abundance is real, but access to it is metered and priced and controlled by people whose interests are not yours.

Whether we get the Federation or the Axiom depends, more than anything else, on that single question of ownership and governance.

What gives me genuine, non-naive reason for cautious optimism?

Several things, actually.

Awareness of the problem is deeper and broader than institutions reflect. The conversation happening in places like this, outside the academic conferences, the corporate ethics teams, and the policy papers, is more sophisticated than the official discourse suggests. People are connecting the dots. The understanding that something fundamental is at stake is not confined to specialists.

The open source AI movement is real and it is motivated, in significant part, by exactly the concerns this series has been mapping. The people building and releasing capable models outside of corporate control are not naive about what they're doing. They understand that the alternative to distributed capability is concentrated capability, and they are choosing a different path with their labor and their time.

The legal and political friction that remains is more durable than it appears. Courts move slowly, but they move. State governments in California, New York, and Colorado have explicitly refused to abandon their regulatory frameworks despite federal pressure. The international governance conversation, thin as it currently is, exists and is developing. These are not sufficient. They are not nothing.

And there is the simple fact that the people driving this transition are not monolithic. There are genuine disagreements inside every major AI company about pace, governance, and responsibility. There are researchers and engineers who are troubled by what they are building and are looking for ways to build it differently. The official positions of institutions do not always reflect the actual distribution of opinion within them.

But the honest answer to "is the Federation possible?" is: yes, and narrowly, and only if.

Only if the productivity gains from automation get distributed rather than concentrated — which requires political will that does not currently exist but could be generated.

Only if the open source and commons-based alternatives to corporate AI infrastructure develop fast enough and become accessible enough to prevent permanent monopolization of the capability.

Only if the governance frameworks that are currently being dismantled get rebuilt — faster, more technically literate, more internationally coordinated than anything that has existed before.

Only if enough people who understand the shape of the problem find ways to make that understanding consequential — in their communities, in their politics, in their professional choices, in what they build and what they refuse to build.

None of these are impossible. All of them must be chosen deliberately, because nothing about the current trajectory produces them automatically.

The Federation didn't happen because technology got good enough. It happened because humanity looked directly at what its worst impulses had produced and decided — collectively, painfully, imperfectly — that a different future was worth building.

We don't have to wait for the catastrophe to make that decision. The lines are visible. The direction of travel is clear. The destination is not fixed.

The unasked question — the one this series has been circling from the beginning — is not whether we can build this. We demonstrably can. It is not even whether we will. We demonstrably are.

The question is whether, somewhere in the accumulated momentum of a million individually rational decisions, there is still room for a choice. A genuine, collective, eyes-open choice about what we are building and who it serves and what we are willing to pay to get something different.

I think there is. I think the window is narrower than most people realize and wider than the despair suggests.

And I think the people most likely to keep it open are the ones paying attention from the ground level — not the boardrooms, not the research labs, not the policy conferences — but the people watching the world change from where they actually live, connecting the dots that the official discourse keeps failing to connect, and refusing to mistake the absence of a villain for the absence of a problem.

The Laughing Man wanted the truth to matter. It still can.

But only if enough people decide it should.

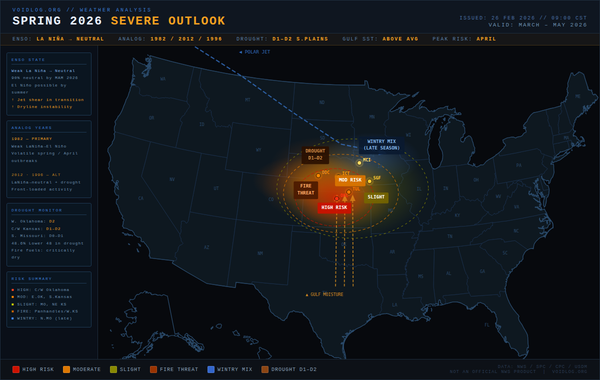

This concludes The Unasked Question, a five-part series on AI, society, and the choices we are still — barely — in a position to make. New writing on technology, weather, and observations from the ground level continues at Voidlog.